ANSYS Blog

December 3, 2021

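How to Select the Right Sensors for Autonomous Vehicles

When the world's first "Motorwagen" was introduced in 1885, the idea that vehicles might one day drive themselves was laughed at. Today, assisted and autonomous driving are a reality, and digital sensors can surpass human perception of motion, distance, and speed.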

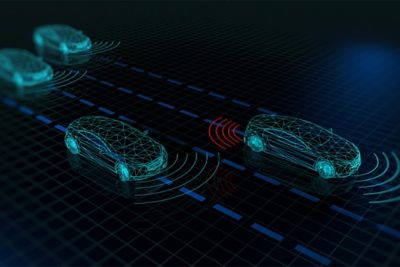

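When used together, sensor technologies such as cameras, lidar, radar, and ultrasonic sensors give a vehicle a complete view of its surroundings, allowing it to drive safely with little or no human intervention.

For engineers and designers, however, finding the right combination of sensors to meet end-user requirements for safety, functional performance, and price requires careful consideration of the role, capabilities, and limitations of each sensor type.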

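Examples of sensor applications in vehicles include:

- Automatic emergency braking

- Blind spot warning

- Lane departure warning

- Lane centering

- Adaptive cruise control

- Traffic jam pilot

- Driverless taxis and delivery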

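What Are the Four Types of Autonomous Vehicle Sensors?

1. Cameras

High-resolution digital cameras help a vehicle "see" and interpret its surroundings. When multiple cameras are mounted around the vehicle, a 360° view lets it detect nearby objects such as other vehicles, pedestrians, road markings, and traffic signals.

Several types of cameras are available to meet different design needs, including NIR cameras, VIS cameras, thermal cameras, and time-of-flight cameras. As with most sensors, cameras work best when different types are used together.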

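- NIR cameras: near-infrared cameras that rely on light outside the visible range, usually paired with an NIR emitter such as an LED

- VIS cameras: identify objects from reflected visible light

- Thermal cameras: detect objects from the infrared energy they emit

- Time-of-flight cameras: measure the distance between the camera and the subject

Cameras are well suited to scenarios such as maneuvering, parking, and lane departure, and they can also recognize driver distraction.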

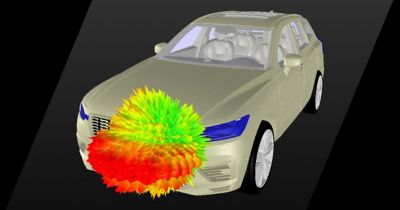

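2. Lidar

Lidar, short for "light detection and ranging," is a remote sensing technology that scans the environment with pulses of light and produces a 3D replica of it. It works on the same principle as sonar, except that lidar uses light instead of sound waves. In an autonomous vehicle, lidar scans the surroundings in real time so the vehicle can avoid collisions.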

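Lidar components:

- Transmitter: emits pulsed light waves into the environment and scans it; distance and depth are determined by measuring the time the light takes to reflect back from an object

- Receiver: captures the reflected light waves to determine the shape, size, speed, and distance of a given object

- Signal processing: compiles and interprets the data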
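The time-of-flight measurement described for the transmitter can be illustrated with a short calculation. This is a minimal sketch rather than a description of how any particular lidar unit works internally; the function name `tof_distance_m` and the 667 ns sample value are assumptions chosen for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_time_s: float) -> float:
    """Estimate the distance to a reflecting object from a pulse's round-trip time.

    Hypothetical helper for illustration only; a real lidar also handles
    noise, multiple returns, and calibration.
    """
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # A return detected about 667 nanoseconds after emission
    # corresponds to an object roughly 100 m away.
    print(f"{tof_distance_m(667e-9):.1f} m")
```

The tiny time scales involved (hundreds of nanoseconds for a 100 m target) are one reason lidar receivers need very fast timing electronics.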

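Lidar perceives depth accurately and determines whether an object is present. It can see objects at long range and in challenging conditions such as darkness, rain, and fog. Because the technology can identify and classify what it sees, it can tell a squirrel from a rock and predict behavior accordingly.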

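3. Radar

Radar is short for "radio detection and ranging." This sensor emits short pulses of electromagnetic waves to detect objects in the environment. When the waves hit an object, they are reflected back to the sensor. In autonomous vehicles, radar is used to identify other vehicles and large obstacles.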

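Radar components:

- Transmitter: sends radio signals in the targeted direction

- Receiver: captures the radio waves when they are reflected from an object

- Interface: translates the radar data into information useful for driving

Because radar does not depend on light, it performs well in any weather and is most commonly used in cruise control and collision avoidance systems.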

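4. Ultrasonic

Where radar uses radio waves and lidar uses light pulses, ultrasonic sensors evaluate objects in the environment by sending out short ultrasonic pulses that are reflected back to the sensor. The technology is cost-effective and well suited to detecting solid hazards; it is typically fitted in car bumpers to warn the driver of obstacles while parking. For the best results in assisted-driving applications, ultrasonic sensors are usually combined with cameras.

Fun fact: Many of the best ultrasonic sensors are found in nature. Bats, dolphins, and narwhals all use ultrasound to identify objects (echolocation).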
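As a rough illustration of the parking-warning use case, the sketch below converts an ultrasonic echo time into a distance and maps it to an alert level. The speed of sound (343 m/s, dry air at about 20 °C), the threshold distances, and the names `echo_distance_m` and `parking_alert` are all assumptions for this example, not values from any specific vehicle system.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumed: dry air at about 20 °C


def echo_distance_m(round_trip_time_s: float) -> float:
    """Distance to an obstacle from the round-trip time of an ultrasonic pulse."""
    # The pulse travels out and back, so the one-way distance is half the path.
    return SPEED_OF_SOUND_M_PER_S * round_trip_time_s / 2.0


def parking_alert(round_trip_time_s: float,
                  warn_m: float = 1.0,
                  stop_m: float = 0.3) -> str:
    """Map an echo time to a hypothetical alert level: 'stop', 'warn', or 'clear'."""
    distance = echo_distance_m(round_trip_time_s)
    if distance <= stop_m:
        return "stop"
    if distance <= warn_m:
        return "warn"
    return "clear"


if __name__ == "__main__":
    for echo_s in (0.001, 0.004, 0.010):
        print(f"{echo_s * 1000:.0f} ms echo -> {parking_alert(echo_s)}")
```

Because sound travels so much more slowly than light or radio waves, echo times are in milliseconds, which keeps the electronics simple and inexpensive but also limits the practical range.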

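Sensor Comparison

| | Camera | Radar | Ultrasonic | Lidar |
| --- | --- | --- | --- | --- |
| Pros | High-resolution color images; highly detailed and realistic | 3D information; compact; long range | Compact; no moving parts; unaffected by light or weather | Unaffected by weather; high resolution even at long range |
| Cons | Poor performance in bad weather and low light | Low resolution; no color | Limited range | No color; price |
| Cost | Affordable | Affordable | Affordable | Expensive |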

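How to Choose the Right Sensor Combination

Each sensor has its own strengths, but assisted driving depends on sensor information working together. As vehicles move toward full autonomy, choosing the right combination of sensors becomes increasingly important for meeting the safety standards autonomy requires.

For the highest levels of safety and performance, sensor fusion across cameras, radar, lidar, and ultrasonic sensors makes the most of each sensor type's strengths while compensating for the weaknesses of the others. For example, lidar alone does not track lanes well, but lidar combined with a camera does so very effectively.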
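One simple way to see how fusion lets a more reliable sensor compensate for a less reliable one is inverse-variance weighting of two independent estimates of the same quantity. This is a textbook technique used here purely for illustration; it is not taken from the article and is far simpler than a production fusion stack. The function name `fuse_estimates` and the sample numbers are assumptions.

```python
from typing import Iterable, Tuple


def fuse_estimates(estimates: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    Returns (fused_value, fused_variance). Illustrative helper only.
    """
    pairs = list(estimates)
    if not pairs:
        raise ValueError("at least one estimate is required")
    if any(variance <= 0 for _, variance in pairs):
        raise ValueError("variances must be positive")
    # More certain sensors (smaller variance) receive larger weights.
    weights = [1.0 / variance for _, variance in pairs]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, pairs)) / total_weight
    # The fused variance is never larger than the best single sensor's variance.
    return fused_value, 1.0 / total_weight


if __name__ == "__main__":
    camera = (52.0, 4.0)  # assumed: distance in m, variance in m^2
    radar = (50.0, 1.0)   # assumed: radar ranges more precisely here
    value, variance = fuse_estimates([camera, radar])
    print(f"fused distance: {value:.1f} m, variance: {variance:.2f} m^2")
```

In this example the fused estimate sits closer to the radar reading, and its variance is lower than either sensor's alone, which is the basic payoff of combining complementary sensors.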

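The right combination depends on several factors:

- What sensor function is required? For example: emergency braking, collision avoidance, parking assistance

- What are the requirements for that function? For example: detecting a vehicle 100 meters away

- What edge cases might the function encounter? For example: bad weather such as fog

- Is the function meant to assist the driver or to automate driving?

- Which sensor set offers the most economical price?

- How does the number of sensors required affect the overall design?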
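The questions above can be framed as a small search problem: list the capabilities a function needs, then look for the cheapest sensor set that covers them. The sketch below is a toy model under stated assumptions. The capability tags and relative cost numbers loosely follow the comparison table but are invented for illustration, and `CATALOG` and `cheapest_suite` are hypothetical names. A real selection would also weigh field of view, redundancy, packaging, and safety requirements.

```python
from itertools import combinations
from typing import Optional, Set, Tuple

# Assumed capability tags and relative costs (1 = affordable, 3 = expensive).
CATALOG = {
    "camera": ({"color", "high_resolution"}, 1),
    "radar": ({"long_range", "all_weather"}, 1),
    "ultrasonic": ({"all_weather", "short_range"}, 1),
    "lidar": ({"long_range", "all_weather", "high_resolution"}, 3),
}


def cheapest_suite(required: Set[str]) -> Optional[Tuple[Tuple[str, ...], int]]:
    """Return (sensor_names, total_cost) for the cheapest covering set, or None."""
    best = None
    names = sorted(CATALOG)
    # Exhaustive search is fine for four sensor types.
    for size in range(1, len(names) + 1):
        for combo in combinations(names, size):
            covered = set().union(*(CATALOG[name][0] for name in combo))
            if required <= covered:
                cost = sum(CATALOG[name][1] for name in combo)
                if best is None or cost < best[1]:
                    best = (combo, cost)
    return best


if __name__ == "__main__":
    # Example: a function that needs color imagery plus long-range,
    # weather-robust detection.
    print(cheapest_suite({"color", "long_range", "all_weather"}))
```

With these assumed values, a camera paired with radar covers the example requirements at lower cost than a camera paired with lidar; changing the tags or costs changes the answer, which is the point of making the requirements explicit.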

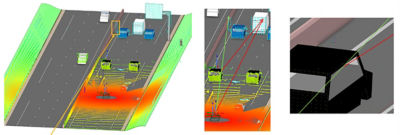

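Testing Sensor Combinations with Simulation

To make sure a prototype vehicle is ready, the sensor design must be run against a wide variety of test cases.

The Ansys simulation platform provides real-time, physics-based sensor responses for cameras, lidar, radar, and ultrasonic sensors, giving engineers all the information they need to validate the safety of an autonomous driving system design.

You can use Ansys simulation from the start of design exploration to understand precisely how different sensor combinations will perform in the real world, then evaluate which combination suits your project based on your goals.

To learn more about using physics-based sensor simulation to make autonomous vehicles safer, watch our on-demand webinar: Making Autonomous Vehicles Safer with Physics-Based Sensor Simulation at Scale.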