ANSYS BLOG

February 22, 2023

Vehicle Connectivity: Bridging AI and Autonomous Vehicles

Connecting information via high-speed wireless network infrastructure so data can be analyzed and shared could very well drive the future of autonomous vehicles. Several factors must be considered to reach high levels of autonomy, including advanced sensor technology, precise determination of vehicle location, up-to-date mapping information, local perception of other vehicles and pedestrians, and planning and decision making. To give you an idea of the magnitude of these interactions, in 2019, there were 31 million vehicles operating with some level of automation. By 2025, almost 60% of new vehicles sold globally will operate at Level 2 autonomy [1].

At this level, we’ve made limited inroads to full autonomy, a huge computational task that will no doubt involve numerous in-vehicle applications requiring near real-time response. All this activity will be coordinated by artificial intelligence (AI) and a high level of connectivity, supported by simulation at every turn, from safety validation to real-world sensor and antenna performance verification. One thing is for sure: advanced simulation of vehicle connectivity, sensor perception, information sharing, and AI decision-making training will have a huge impact on the ultimate delivery of autonomous vehicles.

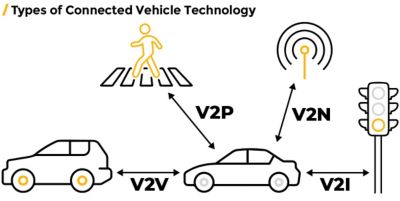

Examples of the connected vehicle technologies responsible for on-road interactions: vehicle-to-vehicle (V2V), vehicle-to-pedestrian (V2P), vehicle-to-network (V2N), and vehicle-to-infrastructure (V2I).

Vehicle Connectivity: The Bridge Between AI and AV

Many conversations need to happen between an autonomous vehicle (AV) and other elements within a self-driving ecosystem. To ensure safety, these conversations are enabled by vehicle-to-vehicle (V2V), vehicle-to-infrastructure (V2I), vehicle-to-network (V2N), vehicle-to-pedestrian (V2P), and vehicle-to-everything (V2X) smart technologies. All of them rely on consistent, low-latency connectivity to expand perception beyond what is directly in front of the vehicle. This is especially true in the dense 5G-and-beyond telecommunication environments of the future, where large volumes of information can be exchanged.
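To see why latency matters, it can help to work out how far a vehicle travels while a message is still in flight. The sketch below is illustrative arithmetic only: the function name, the speed, and the latency values are assumed examples, not figures from this article or from any V2X specification.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Return the metres travelled at speed_kmh while a message is delayed by latency_ms."""
    speed_m_per_s = speed_kmh / 3.6            # convert km/h to m/s
    return speed_m_per_s * (latency_ms / 1000.0)


# Assumed example values, chosen only for illustration.
for latency_ms in (10, 100, 500):
    metres = distance_during_latency(100.0, latency_ms)
    print(f"{latency_ms:>4} ms at 100 km/h -> {metres:.2f} m")
```

At 100 km/h, a 100 ms delay corresponds to roughly 2.8 m of travel, which is why perception that depends on shared data is sensitive to network delay.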

“Fully autonomous environments of the future will all be dictated by a larger communication grid coordinating vehicle movement,” says Christophe Bianchi, Chief Technologist at Ansys. “The vehicle in the city talks to the city, talks to the other vehicles, and all of that involves a mission going from one place to another safely. So, how do you simulate all the events, everything that's happening in the environment, plus all the communication required, including the quality of signals between all these different parameters? That’s a mission for digital mission engineering software.”

How Autotalks Leverages Vehicle Connectivity for Bicyclist Safety

Autotalks, a small apparatus that connects to the handlebar of your bicycle to reduce the risk of collision, is just one example of V2X connectivity in action. This system connects to vehicles and related infrastructure as part of a broader mobility ecosystem in which all vehicles, including bikes, are connected and talking to the infrastructure and each other, monitoring traffic and sharing critical information in real time.

Reliable service and coverage are the most important metrics by which the success of Autotalks and other connected technologies will be measured. Simulation enables 5G designers to achieve these objectives by helping them accurately model the real-world performance of millimeter-wave and beam-forming antennas and optimize the power, performance, and cost of mixed-signal systems-on-chip (SoCs) and application processors. It also increases product reliability through chip-package-system, electro-thermal, and thermo-mechanical analysis.

“Ansys brings reliability and performance to the entire 5G ecosystem from devices to networks to data centers,” says Dr. Larry Williams, Distinguished Engineer at Ansys. “Fortune 500 high-tech companies, including the leading 5G players, are using our semiconductor and multiphysics simulation tools and workflows to deliver 5G connectivity on a large scale.”

Ansys modeling and simulation solutions enable V2X communication for autonomous vehicles. In the scenario pictured above, a car arriving at an intersection must quickly determine whether the traffic light is about to change (V2I) and what the other cars intend to do (V2V) so that it can compute the safest avoidance maneuver. The blobs represent the radiation patterns of the various antennas as they appear in Ansys HFSS.

Artificial Intelligence: The Brains of the Applications

AI and machine learning (ML) are vital to the advancement of self-driving technology, with the combination of vast datasets and rule-based systems enhancing performance. These technologies calculate risk scores for driving maneuvers, enabling decision-making in automotive systems.
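As a rough illustration of how a learned estimate and hand-written rules might be combined into a single risk score, consider the sketch below. Everything in it is hypothetical: the `Maneuver` fields, the rule penalties, and the example values are assumptions made for illustration and do not describe any Ansys product or production driving stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    name: str
    collision_prob: float     # hypothetical output of a learned model, in [0, 1]
    min_gap_m: float          # smallest predicted gap to another road user, in metres
    crosses_red_light: bool   # e.g. derived from a V2I signal-phase message


def risk_score(m: Maneuver, min_safe_gap_m: float = 2.0) -> float:
    """Combine a learned probability with rule-based penalties (illustrative weights)."""
    score = m.collision_prob
    if m.min_gap_m < min_safe_gap_m:
        score += 0.5          # rule: penalise tight gaps
    if m.crosses_red_light:
        score += 1.0          # rule: heavily penalise running a red light
    return score


def safest(maneuvers: list[Maneuver]) -> Maneuver:
    """Pick the maneuver with the lowest risk score."""
    return min(maneuvers, key=risk_score)


# Assumed candidate maneuvers at an intersection.
candidates = [
    Maneuver("proceed", collision_prob=0.10, min_gap_m=1.5, crosses_red_light=True),
    Maneuver("brake", collision_prob=0.05, min_gap_m=4.0, crosses_red_light=False),
    Maneuver("swerve_left", collision_prob=0.20, min_gap_m=1.0, crosses_red_light=False),
]
print(safest(candidates).name)
```

The additive penalties keep the rules easy to audit. A real system would need calibrated probabilities and validated thresholds, which is where the data coverage problem discussed next comes in.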

However, Jay Pathak, Senior Director of R&D at Ansys, acknowledges a significant challenge in automotive AI/ML: “The discovery of those rules becomes a hard problem when the data is not covering the entire space correctly.” Because so many factors interact on the road, it is difficult to collect data for each variable independently, which presents a major difficulty for the machine learning community in this sector.

Despite advancements by original equipment manufacturers (OEMs) like Mercedes-Benz, we still lack comprehensive data for full autonomy. Future progress will require shifting focus to useful data and leveraging unsupervised learning methods, simulations, and real-world sensor data to build more dynamic and challenging datasets.

Simulations and nonlinear solvers are crucial to this effort, allowing autonomous vehicle functionality to be broken down into subtasks informed by data from sources such as lidar, radar, and other sensors.

AI/ML is also instrumental in annotating real-world driving maps and sensor data, improving the robustness and safety of perception outcomes through accurate training and stress testing.

Looking towards full autonomy, future vehicles will need structural changes to accommodate data-gathering systems and integrated sensors. Factors such as a radar's ability to transmit signals through vehicle materials can be efficiently analyzed in virtual simulation environments.

The adoption of driverless technology will cause a paradigm shift in the industry, from vehicle ownership to usership. With this realization, OEMs and mobility suppliers are looking to create recurring revenue streams through more detailed service models. Constant location and schedule sharing of the customer/driver, through vehicle connectivity, can lead to personalized service recommendations from vendors, consequently unlocking additional revenue streams and opportunities.

3D electromagnetic wave simulation helps ensure uninterrupted V2V and V2I communication.

On the Road to Making New Connections and Learning New Things with Ansys

Behind every on-road communication and every cloud-based data exchange is a corresponding Ansys solution.

- Ansys Digital Mission Engineering includes ready-to-use desktop applications, developer tools, and enterprise software that combines digital modeling, simulation, testing, and analysis to evaluate mission outcomes at every phase of a system’s life cycle.

- Ansys HFSS is a 3D electromagnetic (EM) simulation software for designing and simulating high-frequency electronic products such as antennas, antenna arrays, RF or microwave components, high-speed interconnects, filters, connectors, IC packages and printed circuit boards.

- Ansys LS-DYNA is a general-purpose finite element software for simulating complex structural problems, specializing in nonlinear, transient dynamic problems using explicit integration. It provides fully automated contact analysis and a wide range of material models with industry-leading capabilities for drop tests, impact and penetration, crashes and collisions, occupant protection, and other applications.

- Ansys AVxcelerate Sensors readily integrates the simulation of ground-truth sensors, including camera, radar, and lidar, to virtually assess complex ADAS and autonomous vehicle systems.

- Ansys optiSLang is a process integration and design optimization solution that automates key aspects of the robust design optimization process. For automated driving function validation, optiSLang reduces the number of simulations required by a factor of 1,000 and provides robust reliability analysis and evaluation for parameterized driving scenarios.

- Ansys RedHawk-SC is a complete solution for analyzing multi-die chip packages and interconnects for power integrity, layout parasitic extraction, thermal profiling, thermo-mechanical stress, and signal integrity.

These products are all part of our broader portfolio for advancing transportation and mobility innovation.

References

- Autonomous Vehicles Worldwide - Statistics & Facts, statista.com, September 23, 2022.