Ansys Blog

February 24, 2022

How Do Autonomous Vehicles "See" the Road in Snow?

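To drive safely, an autonomous vehicle must first "see" the world around it. Using an integrated system of cameras, lidar, and radar sensors, autonomous vehicles continuously scan their environment to make informed decisions. But what happens when those sensors are obscured by snow?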

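Rain and fog are also adverse weather conditions for autonomous vehicles, but the random pattern of snowfall, the characteristics of each snowflake, and the varying distances between flakes make snowy days particularly challenging. When sticky, cold, chaotic snow comes between a sensor and an obstacle, the vehicle's ability to respond appropriately can be severely compromised.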

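According to the U.S. Federal Highway Administration, nearly 70% of the U.S. population lives in snowy regions. California may offer ideal weather for autonomous vehicles, but in reality these vehicles must overcome the complexity of driving in snow before most U.S. consumers can adopt them.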

Snowflakes generated with 3D textures in Ansys Speos for analysis of their optical properties.

Why Is Snow So Difficult for Autonomous Vehicle Sensors?

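When perception algorithms try to process the information in sensor signals, snow lowers their confidence. The result is either a failure to detect an approaching object or the false detection of an object that is not actually there.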

The challenges autonomous vehicle sensors face in snow include:

- Lack of contrast between snow and other objects, which causes objects to go undetected

- Scattering by snow, which can lead to misidentifying an object's position, distance, or angle

- Inputs from multiple sensors must agree on what they "see," but snow causes different problems for different sensor types, which undermines sensor consensus
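To make the consensus problem in the last bullet concrete, here is a minimal sketch of a confidence-weighted vote across sensors. It is an illustration only, not how any particular perception stack or Ansys product fuses data; the `Detection` class, the `fused_score` function, and all confidence values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    sensor: str        # e.g. "camera", "lidar", "radar"
    detected: bool     # did this sensor report an object?
    confidence: float  # perception confidence in [0, 1] (made-up values below)


def fused_score(detections):
    """Confidence-weighted vote in [-1, 1]; positive means 'object present'."""
    total = sum(d.confidence for d in detections)
    if total == 0:
        return 0.0
    signed = sum(d.confidence if d.detected else -d.confidence for d in detections)
    return signed / total


# Hypothetical clear-weather case: all sensors agree with high confidence.
clear = [
    Detection("camera", True, 0.9),
    Detection("lidar", True, 0.9),
    Detection("radar", True, 0.8),
]

# Hypothetical snow case: the camera misses the object and lidar confidence drops.
snow = [
    Detection("camera", False, 0.3),
    Detection("lidar", True, 0.4),
    Detection("radar", True, 0.8),
]

print(fused_score(clear))
print(fused_score(snow))
```

With these invented numbers the sensors agree fully in clear weather, while in snow the camera dissents and the fused score drops, which is the kind of weakened consensus the list describes.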

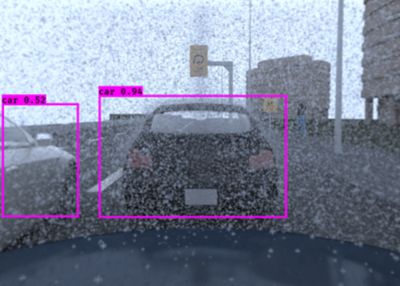

Because of reduced contrast, snow degrades the detection of vehicles alongside.

What Happens to Sensor Accuracy in Snow?

Different sensors have different weaknesses in snow:

Cameras: reduced visibility and contrast

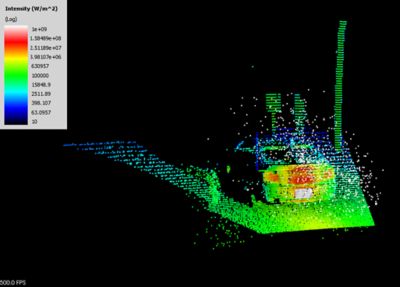

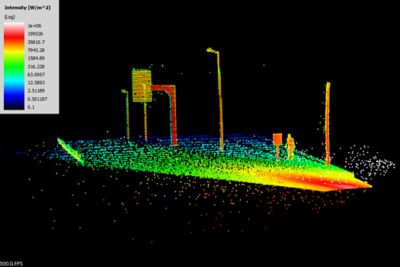

Lidar: signal scattering, absorption, and attenuation, which give perception algorithms low-quality data and false results

Radar: can detect objects but cannot classify them correctly
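For a rough feel of the lidar attenuation mentioned above, the sketch below applies the Beer-Lambert law to a round-trip laser path. This is a common textbook simplification that the article itself does not name: it ignores multiple scattering and the false returns caused by individual flakes. The function name and the extinction coefficient are assumptions chosen purely for illustration.

```python
import math


def relative_return_power(range_m, extinction_per_m):
    """Two-way Beer-Lambert factor exp(-2 * alpha * R), relative to clear air.

    range_m:          one-way distance to the target in meters
    extinction_per_m: assumed extinction coefficient of the snowy air (1/m)
    """
    return math.exp(-2.0 * extinction_per_m * range_m)


# Assumed extinction coefficient; real values depend on snowfall rate and flake type.
ALPHA = 0.01

for distance in (10, 50, 100):
    factor = relative_return_power(distance, ALPHA)
    print(f"{distance:>4} m: {factor:.3f} of the clear-air return")
```

Because the loss compounds exponentially with distance, distant objects are affected far more than nearby ones under this model.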

Weatherproofing Autonomous Vehicles: How Engineers Test New Solutions

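To prepare autonomous vehicles for the unpredictability of snow, engineers must test their designs against countless driving scenarios. They have several options for testing: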

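Weather labs: These climate-controlled facilities provide repeatable weather data, but they do not account for how other vehicles and dynamic road conditions affect the weather.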

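Road testing: Driving on safe stretches of road exposes the autonomous system to real weather conditions, but it does not allow for rapid technology development.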

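Simulation: Digital testing creates an unlimited number of models with real-world accuracy, which reduces the time and cost of building physical prototypes and does not depend on the weather cooperating.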

How Can Simulation Help Autonomous Vehicles "See" Better in Snow?

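Simulation gives engineers a better way to test and improve autonomous vehicle behavior in adverse weather by letting them model unlimited weather and scenario variables. With simulation, there is no need to wait for snow to fall before testing. Because results are available almost immediately, autonomous vehicle manufacturers can develop weather-aware autonomous systems faster.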

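Although simulation excels at accurately predicting outcomes in harsh environments such as outer space and the deep sea, snow poses a unique and complex challenge. For a simulation to reflect the effects of snow accurately, the model must account for each snowflake's shape, size, position, and optical properties (that is, whether the water is clear or contains impurities). The model must also account for the location and shape of snow-covered areas on the road surface.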
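As a rough picture of what "accounting for each snowflake" means in terms of data, the sketch below samples a small population of flakes with the four attributes named above. It is not the Speos or Fluent workflow. The `Snowflake` class, the shape names, and every numeric range are placeholder assumptions.

```python
import random
from dataclasses import dataclass


@dataclass
class Snowflake:
    position_m: tuple         # (x, y, z) inside the sampled volume, in meters
    diameter_mm: float        # size
    shape: str                # coarse shape label
    impurity_fraction: float  # stand-in for optical properties (0 = clear water)


def sample_snowflakes(count, volume_m=(10.0, 10.0, 5.0), seed=0):
    """Draw `count` snowflakes uniformly at random; all ranges are illustrative."""
    rng = random.Random(seed)  # seeded so a scenario can be reproduced
    shapes = ["plate", "dendrite", "column"]
    flakes = []
    for _ in range(count):
        position = tuple(rng.uniform(0.0, side) for side in volume_m)
        flakes.append(
            Snowflake(
                position_m=position,
                diameter_mm=rng.uniform(1.0, 10.0),
                shape=rng.choice(shapes),
                impurity_fraction=rng.uniform(0.0, 0.05),
            )
        )
    return flakes


flakes = sample_snowflakes(1000, seed=42)
print(len(flakes), flakes[0])
```

Seeding the generator makes a given snowfall scenario repeatable, which is the property that lets a simulated test be rerun exactly, unlike a real storm.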

Long-range, physics-based sensor simulation of lidar (left) and a camera in snow (right).

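Ansys Fluent is used to run computational fluid dynamics (CFD) simulations of snow. Fluent can also be used to analyze weather-induced sensor soiling, droplet impingement and the transition to film flow, fogging and surface condensation, frosting, icing, and de-icing. The high-fidelity, repeatable weather data generated by CFD simulation can be exported to Ansys Speos for camera and lidar simulation.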

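By using Fluent in a coupled CFD-optical solution, autonomous vehicle engineering teams can speed up testing, confidently integrate mixed-function sensor designs, and significantly improve vehicle perception in all kinds of weather, even snow.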