ANSYS BLOG

March 23, 2022

Ansys and NVIDIA Collaborate on Building a High-Fidelity AV Sensor Simulation Toolchain

Autonomous vehicle developers can use Ansys sensor models with NVIDIA DRIVE Sim to accelerate AV development and validation.

Today, Ansys and NVIDIA, two powerhouses in high-performance computing and AV simulation, announce their collaboration to accelerate AV development.

Autonomous driving imposes rigorous requirements on sensor modeling, prototyping, and testing. With simulation, AV developers can virtually prototype sensors, understand the strengths and limitations of each sensor modality, and identify optimal configurations for specific AV applications.

NVIDIA DRIVE Sim on Omniverse is a scalable, physically accurate, open, and modular AV simulation platform. NVIDIA's core technologies of RTX, Omniverse, and artificial intelligence (AI) are exposed for partner integration through a powerful and standardized API.

Run large-scale, physically accurate multi-sensor simulation with NVIDIA DRIVE Sim.

Ansys brings deep engineering expertise in multiphysics simulation and product development to this collaboration. Ansys’ AVxcelerate is a collection of powerful tools for high-fidelity physics simulation of camera, radar, and lidar sensors. For radar, the simulation can predict sensor responses under diverse driving and environmental conditions. This provides precise insights into real-life radar operation and reduces the need for expensive and time-consuming physical testing.

Ansys camera, radar, and lidar simulation allows AV developers to validate their perception algorithms thoroughly by simulating advanced behaviors in diverse environmental conditions. Ansys radar simulation can simulate multi-mode radar in real time, taking into account complex features such as road surface roughness, vehicle wheels, and pedestrian micro-Doppler signatures. Ansys camera simulation can model physically accurate effects of light propagation through media such as lenses, as well as the interactions of multi-spectral light sources with different lab-validated materials.
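To give a feel for why rotating wheels matter to a radar model, here is a minimal sketch of the textbook two-way Doppler relation, f_d = 2v/λ. It is not Ansys or NVIDIA code. The 77 GHz carrier, the stationary radar, and the target moving straight along the line of sight are all assumptions chosen for illustration, and the function names are hypothetical.

```python
C = 299_792_458.0  # speed of light in m/s


def doppler_shift_hz(radial_speed_mps: float, carrier_hz: float) -> float:
    """Two-way Doppler shift for a scatterer closing at radial_speed_mps."""
    wavelength_m = C / carrier_hz
    return 2.0 * radial_speed_mps / wavelength_m


def wheel_doppler_span_hz(vehicle_speed_mps: float, carrier_hz: float):
    """Doppler shifts of the contact patch, the vehicle body, and the tire top.

    For a wheel rolling without slipping, the contact patch is momentarily
    at rest relative to the ground and the top of the tire moves at twice
    the vehicle speed.
    """
    body = doppler_shift_hz(vehicle_speed_mps, carrier_hz)
    return 0.0, body, 2.0 * body


# Assumed scenario: 77 GHz radar, vehicle approaching at 10 m/s.
low, body, high = wheel_doppler_span_hz(10.0, 77e9)
print(f"body: {body:.0f} Hz, wheel spread: {low:.0f} to {high:.0f} Hz")
```

Under these assumptions, the wheels spread energy across a band of Doppler frequencies around the body return, which is the kind of signature a perception algorithm may rely on.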

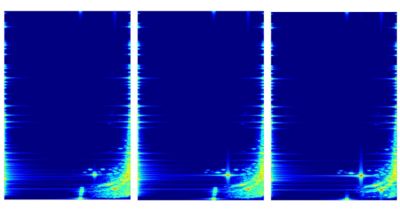

Ansys provides an accurate and efficient means to simulate rough surface backscatter (diffuse scattering) of radar reflections.
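As a rough illustration of when a surface starts to look "rough" to a radar, the sketch below applies the classical Rayleigh roughness criterion, h < λ / (8 cos θ), where h is the surface height variation and θ is the incidence angle measured from the surface normal. This is a textbook rule of thumb, not the Ansys scattering model, and the 77 GHz carrier and the function name are assumptions made for the example.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def rayleigh_height_limit_m(carrier_hz: float, incidence_deg: float) -> float:
    """Height variation above which a surface counts as rough (Rayleigh criterion).

    incidence_deg is measured from the surface normal, so 0 means the radar
    looks straight down at the surface.
    """
    wavelength_m = C / carrier_hz
    return wavelength_m / (8.0 * math.cos(math.radians(incidence_deg)))


# Assumed 77 GHz radar: compare normal incidence with a shallow look angle.
for angle_deg in (0.0, 80.0):
    limit_mm = rayleigh_height_limit_m(77e9, angle_deg) * 1e3
    print(f"incidence {angle_deg:.0f} deg: rough above about {limit_mm:.2f} mm")
```

At millimeter wavelengths the threshold is well under a millimeter at normal incidence, so typical road textures can produce diffuse backscatter, while the same surface appears smoother at shallow look angles.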

By integrating Ansys’ tools with the NVIDIA DRIVE Sim platform, AV developers can bridge the gap between sensor design, where finite element and Monte Carlo ray tracing technologies are used, and system validation, where GPU-accelerated, physics-based models can run at up to real-time speed.

Omniverse connects to a vast ecosystem of partners for content and environments, helping users scale data generation and simulation with unique corner cases and coverage for training and validation of their sensor models.

To learn more about Ansys, check out the NVIDIA GPU Technology Conference (GTC) presentation by Jeffrey Decker and Mathieu Reigneau, Lead R&D Engineer and Product Manager at Ansys: “Real-Time Radar and Dynamic Camera Simulation for Complex Autonomous Driving Environments.” You can request a demo of AVxcelerate here.