Quick Specs

Enrich your sensor perception test cases and coverage with accurate real-time synthetic data. Set up multi-sensor simulation in a SiL or HiL context to guarantee your ADAS/AV system performance under any operating condition.

Ansys AVxcelerate realistic sensor testing and validation enables you to test your autonomous vehicles, ADAS and sensors faster than with physical prototypes.

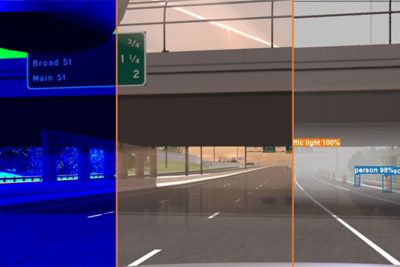

Realistic Driving Scenarios using the Driving Simulator of Your Choice

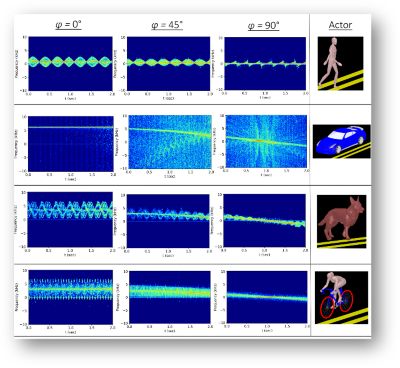

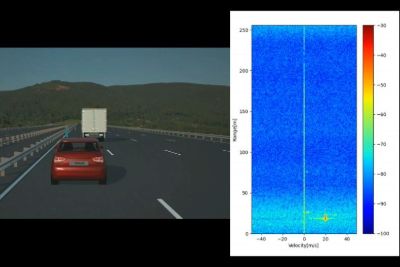

Ansys AVxcelerate Sensors provides physically accurate sensor simulation for autonomous system testing with sensor perception. Save testing time and cost while increasing perception performance for camera, LiDAR, radar, and thermal camera sensors. Leveraging AVxcelerate's real-time capabilities, you can perform virtual testing in a software-in-the-loop (SiL) or hardware-in-the-loop (HiL) context as your design cycle progresses.

March 2026

AVxcelerate 26R1 advances a systems-to-silicon approach with NVIDIA Omniverse digital twins, real-time Light Propagation Engine camera simulation, and visual radar tooling for precise sensor modeling. Together with native NCAP scenarios, it delivers higher perception fidelity and rigorous, safety-focused validation for ADAS and autonomous driving systems.

The Light Propagation Engine (LPE) models true light transport to deliver physically accurate, multispectral camera sensing in bright and adverse conditions. With real-time, photorealistic performance, support for 8-Mpix imagers, edge-case weather, and HiL-ready image injection, LPE enables high-confidence, hardware-in-the-loop AVxcelerate sensor validation.

By integrating NVIDIA Omniverse into AVxcelerate, we create a unified, state-of-the-art asset preparation pipeline that dramatically simplifies and accelerates digital twin creation. Engineers can import or generate 3D worlds, apply materials directly from the Ansys multi-spectral database through a modern UI, and prepare assets natively for AVxcelerate simulation.

New tooling in the Sensors Lab streamlines the configuration of complex radar sensors by providing a visual helper that enables efficient positioning and verification of Tx and Rx antennas.

This demo video shows how Ansys solutions for autonomous vehicles (AVs) work together to provide a complete solution for designing and simulating AVs, including lidar and other sensors, functional safety, and safety-critical embedded code, all simulated through virtual reality to reduce physical road testing requirements.

“Our camera sensor technology is critical to the work we are doing in supporting autonomous function for our customers. Using Ansys AVxcelerate Sensors during ADAS/AD testing and validation, we were able to confidently test real-life scenarios that were previously off-limits to us with simulation, with complete confidence in the accuracy of our results. Even though the work to develop a well-rounded solution is still ongoing, the collaboration between Ansys AVxcelerate Sensors and Continental camera sensor solutions is already delivering promising results.”

— Dr. Martin Punke Head of Camera Product Technology / Continental

To achieve a high level of accuracy, advanced driver assistance systems/autonomous driving (ADAS/AD) technology requires Continental to target its camera sensors for simulation. Continental engineers drive on test tracks or roads to train, test, and validate ADAS or AD systems. They also perform component-level testing and simulation; however, only limited engineering simulation solutions are available to tackle this problem. Although the effort to develop a well-rounded solution is ongoing, the collaboration between Ansys AVxcelerate Sensors and Continental camera sensor solutions is delivering promising results.

Ansys AVxcelerate provides physically accurate sensor simulation for autonomous system testing with sensor perception in the loop. Save on testing time and cost while increasing perception performance for camera, lidar, radar, and thermal sensors.

Benefit from powerful ray-tracing capabilities to recreate sensor behavior and easily retrieve sensor outputs through a dedicated interface.

AVXCELERATE SENSOR RESOURCES & EVENTS

Join us to hear about new capabilities and features in the 2023 R2 release of Ansys AV Simulation (AVxcelerate). This release introduces improvements to the camera, thermal camera, and radar models and to the simulation ecosystem, allowing users to run more accurate, higher-fidelity simulations.

For a sustainable business model solution, an intensive trade-off between performance and safety is made in the development of AD systems. Learn how Ansys solutions address critical technical challenges in areas such as sensor and HMI development and system validation.

In-cabin sensing system requirements are increasingly becoming an essential part of government policies and the criteria of car safety rating organizations. Learn about in-cabin sensing system requirements and watch physics-based sensor simulation applied to the development and validation of in-cabin monitoring systems.

White Paper
