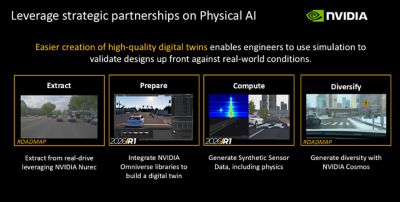

In a previous blog, we explored the value of incorporating NVIDIA Omniverse libraries and microservices into Ansys 2026 R1 AVxcelerate Sensors simulation software to facilitate asset preparation and physics-based simulation for physical AI-powered applications.

This time, we’ll explore the value of using novel libraries and world models such as NVIDIA Omniverse NuRec and NVIDIA Cosmos to enhance virtual sensor testing and validation in AVxcelerate Sensors software.

NuRec transforms real-world data into high-fidelity 3D simulation environments, while Cosmos adds generative variation that diversifies the simulated scenarios used to train and test autonomous driving (AD) systems. Both help ADAS/AD developers, simulation engineers, and AVxcelerate users account for more complex edge cases during autonomous vehicle (AV) software stack development.

Extracting the Reality Road Map

Manual 3D modeling can be time- and resource-intensive. Using NuRec with AVxcelerate Sensors software turns this paradigm on its head by automating the creation of scalable, high-fidelity 3D environments, so they are ready for simulation faster.

NuRec is a set of libraries for neural reconstruction and rendering that transforms raw, multimodal sensor recordings, such as camera streams, lidar point clouds, and egomotion data (the 3D motion of a camera in an environment), into rich, simulation-ready environments. Data is gathered from real-world driving scenarios, then converted into digital environments that can be used for advanced AV testing.

This set of tools uses modern neural techniques, including 3D Gaussian Splatting (3DGS), to represent scenes as collections of anisotropic Gaussians. These capture both the geometry and the appearance of an environment, including nuanced details such as colors and textures, for higher visual accuracy in recreated digital scenes. Because NuRec integrates into existing workflows, engineers can process real driving datasets and generate digital twins of actual driving scenarios.
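As a rough illustration of what "anisotropic Gaussians" means, the sketch below follows the standard 3DGS formulation, in which each Gaussian's covariance is built from a rotation R and per-axis scales S as Sigma = R S S^T R^T. It is a minimal pure-Python sketch, not NuRec code; the function names and example values are assumptions chosen for illustration.

```python
import math


def quat_to_rotation(w, x, y, z):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def covariance(rotation, scales):
    """Build Sigma = R S S^T R^T, where S = diag(scales)."""
    return [
        [
            sum(rotation[i][k] * scales[k] ** 2 * rotation[j][k] for k in range(3))
            for j in range(3)
        ]
        for i in range(3)
    ]


def gaussian_weight(point, mean, rotation, scales):
    """Unnormalized falloff exp(-0.5 * d^T Sigma^-1 d) at a 3D point.

    Because Sigma^-1 = R S^-2 R^T, the offset is rotated into the
    Gaussian's local frame and divided by each axis scale, which
    avoids inverting the covariance matrix explicitly.
    """
    d = [p - m for p, m in zip(point, mean)]
    local = [sum(rotation[k][i] * d[k] for k in range(3)) for i in range(3)]
    mahalanobis_sq = sum((local[i] / scales[i]) ** 2 for i in range(3))
    return math.exp(-0.5 * mahalanobis_sq)
```

With scales `(2.0, 0.5, 0.5)` and a 90-degree rotation about the z-axis, the Gaussian's long axis points along world y. A point two units away along y then sits only one standard deviation out, while a point the same distance away along x falls off far more steeply. That direction-dependent falloff is what lets a single Gaussian approximate a thin, oriented piece of a surface.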

Converting driving datasets into more versatile simulation environments lets engineers explore variables such as traffic conditions, lighting, and weather. These digital environments can then be enriched further by adding new objects or dynamic elements, without costly manual intervention. AVxcelerate Sensors software applies electrical and optical properties to the new objects so that simulations remain accurate.

“All of these AI-based techniques are complementary to what our customers are currently doing with traditional simulation tools,” says Emmanuel Follin, senior manager, product management at Ansys, part of Synopsys. “To validate safety, you can’t just add assets like vehicles or pedestrians and define the behavior of each of them. Using NuRec, however, you can enrich the driving environment before running the simulation. As part of the data pipeline, data is curated and prepared so that engineers can reproduce road environments and real-life situations for day-to-day testing, ‘shifting left’ validation activities that are normally planned at a later stage.”

Scaling Diversity With NVIDIA Cosmos

In the near future, Ansys will provide users with another approach to enriching simulation environments: generative variation using NVIDIA Cosmos with open world foundation models (WFMs). The tool uses style transfer techniques to modify existing digital scenarios. It allows engineering teams to introduce variations into their simulations that reflect a range of conditions, such as weather patterns, lighting changes, and regional differences.

Cosmos infuses test environments with greater variability and realism, expanding the depth and breadth of scenarios that AV systems might encounter. It creates nuanced variations from a single base environment without extensive manual input.

Consider a daytime urban driving scene. With the help of Cosmos, it could be reimagined under conditions of greater visual complexity that might challenge AV perception systems, such as snow, rain, or fog, or with unexpected traffic behaviors introduced.

All of these changes are driven by generative AI models that can simultaneously maintain contextually consistent details and introduce the diversity required to evaluate AV perception under a broad range of conditions. These enriched datasets can then be used for training and post-training processes to address gaps identified during controlled testing.
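To make the idea of many variations from a single base environment concrete, the sketch below enumerates the combinations of conditions a team might request for one scene. It does not call Cosmos or any Ansys API. `ScenarioVariant`, `enumerate_variants`, and the scene and condition names are hypothetical, used only to show how quickly the test matrix grows.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class ScenarioVariant:
    """One requested variation of a base scene (hypothetical structure)."""

    base_scene: str
    weather: str
    time_of_day: str
    region: str

    def label(self):
        """Human-readable identifier, e.g. for naming output datasets."""
        return f"{self.base_scene}/{self.weather}/{self.time_of_day}/{self.region}"


def enumerate_variants(base_scene, weathers, times_of_day, regions):
    """Return every combination of the given conditions for one base scene."""
    return [
        ScenarioVariant(base_scene, weather, time_of_day, region)
        for weather, time_of_day, region in product(weathers, times_of_day, regions)
    ]


# Illustrative values only: one daytime urban scene, varied along three axes.
variants = enumerate_variants(
    "urban_day_001",
    weathers=["clear", "rain", "snow", "fog"],
    times_of_day=["noon", "dusk", "night"],
    regions=["EU", "US"],
)
```

Four weather states, three times of day, and two regions already yield 24 variants of one recorded scene. This shows why generating them automatically, rather than modeling each by hand, matters for coverage.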

“Cosmos integrates into the broader AV development pipeline to ensure robust model performance,” says Follin. “By introducing diversity in training datasets, it reduces risk and improves reliability in more complex operational environments.”

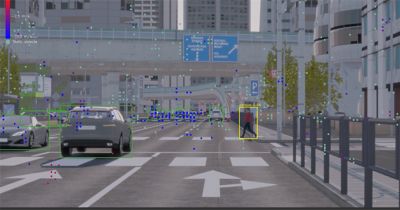

What AVxcelerate Software Does Best: Physics-Based Synthetic Sensor Generation

Sensors, whether optical cameras, radars, thermal cameras, or lidars, are crucial to AV functionality. They deliver the input data to vehicular physical AI systems. AVxcelerate Sensors software provides the GPU-native, physically accurate sensor simulation needed to put vehicle sensor perception to the test in a virtual environment. The resulting data supports more reliable AV system design.

“The integration of AVxcelerate Sensors’ physics-based synthetic sensor generation capabilities into Omniverse provides high-fidelity perception inputs while reducing road testing,” says Follin.

The following AVxcelerate Sensors features are central to the success of this integration:

- A light propagation engine models true light behavior to produce multispectral camera sensing (a technology that captures light across multiple bands of the electromagnetic spectrum). Recording light interactions such as glare, reflections, and scattering under a variety of lighting and weather conditions results in realistic image data that can be used to replicate the challenges of real-world scenarios.

- Physics-based radar modeling enhances radar simulation realism by accurately replicating signal propagation and interactions with surrounding objects. It enables the precise emulation of radar behavior, further contextualizing it in terms of material properties, surface angles, environmental conditions, and other factors. Engineers can capture and optimize sensor performance across a range of situations, including rare edge cases.

- A coherent simulation process in AVxcelerate Sensors software delivers a robust way of generating high-quality sensor data. The software can be used to simulate cameras, radar, and other perception devices with physics-driven accuracy. It also ensures that the inputs used for development and validation closely align with the real world, so engineers can anticipate and resolve potential challenges earlier in the design process while avoiding a simulation-to-reality gap.
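The radar feature above mentions signal propagation and the influence of material properties. As a rough illustration of why range and target reflectivity matter so much, the sketch below evaluates the textbook free-space monostatic radar equation, P_r = P_t G^2 lambda^2 sigma / ((4 pi)^3 R^4). This is a deliberately simplified stand-in, not the AVxcelerate radar model, which accounts for far more (multipath, materials, surface angles, environment). The function name and example values are assumptions.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def received_power_w(tx_power_w, antenna_gain, freq_hz, rcs_m2, range_m):
    """Received power from the free-space monostatic radar equation.

    tx_power_w   -- transmitted power in watts
    antenna_gain -- linear (not dB) gain, same antenna for transmit and receive
    freq_hz      -- carrier frequency in hertz
    rcs_m2       -- target radar cross-section in square meters
    range_m      -- distance to the target in meters
    """
    wavelength_m = SPEED_OF_LIGHT_M_S / freq_hz
    numerator = tx_power_w * antenna_gain ** 2 * wavelength_m ** 2 * rcs_m2
    denominator = (4 * math.pi) ** 3 * range_m ** 4
    return numerator / denominator


# Illustrative values only: a 77 GHz automotive-band radar and two targets
# with different cross-sections at the same range.
large_target = received_power_w(1.0, 100.0, 77e9, rcs_m2=10.0, range_m=50.0)
small_target = received_power_w(1.0, 100.0, 77e9, rcs_m2=1.0, range_m=50.0)
```

Because received power falls with the fourth power of range, doubling the distance cuts the return by a factor of 16, and a target with a tenth of the cross-section returns a tenth of the power. Small, distant, weakly reflecting objects are therefore exactly the edge cases that benefit from physics-based simulation.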

Putting It All Together in One Unified Workflow

At a high level, the AI-powered Ansys AVxcelerate simulation workflow systematically integrates advanced tools to turn real-world driving data into simulation-ready environments.

It begins with the collection of a real driving dataset through vehicle sensors and recording systems. NVIDIA Omniverse NuRec then transforms the raw recordings into detailed digital environments that capture the physical layout, textures, and dynamic elements of real-world scenarios.

The recreated environment is then prepared within the Omniverse libraries. Engineers can fine-tune the environment by applying detailed properties to objects and surfaces that reflect the behavior of light, materials, and sensor interactions, infusing a high degree of customization and precision into testing scenarios and supporting more robust AVxcelerate Sensors simulations.

Cosmos extends the scope of this testing by introducing generative variations that dynamically modify existing digital environments to reflect a wider range of scenarios. It gives engineers access to an expanded dataset that incorporates multiple additional regional, temporal, and atmospheric conditions, without requiring further manual input to create diverse simulation environments.

“Every stage, from data collection to environment enrichment, contributes to a robust testing platform capable of addressing a wide range of operational challenges,” says Follin. “It provides a path for refining AV systems through iterative testing using both real-world fidelity and synthetic diversity.”

Ansys Takes the Driver’s Seat During AV Development

The integration of advanced, AI-based technologies like NVIDIA Omniverse NuRec and Cosmos with Ansys AVxcelerate simulation is redefining the entire AV development process. This creative synergy will enable automotive engineers to address long-standing industry challenges, including limited access to diverse testing conditions and the exorbitant cost of physical trials.

By combining synthetic data generation and advanced environmental modeling, it’s possible to put vehicle systems to the test under conditions that might otherwise be difficult or impractical to replicate, and to do so with a level of control and repeatability that enhances validation efforts. The ability to introduce variations, such as changing lighting angles or adjusting traffic densities, helps ensure that autonomous systems of the future are not only robust but also adaptive to nuanced environmental changes.

To learn more about AVxcelerate software, watch the webinar Ansys 2026 R1: Ansys Autonomous Vehicle Simulation What’s New.

